Google has unveiled an AI-powered visual search capability that uses a "query fan-out" method to generate multiple descriptive queries from a single user image, with the aim of returning more comprehensive and relevant results. Part of the broader integration of generative AI into Google Search, it marks a technical shift away from traditional single-query image search and could change how users find information about complex real-world objects.

Key Takeaways

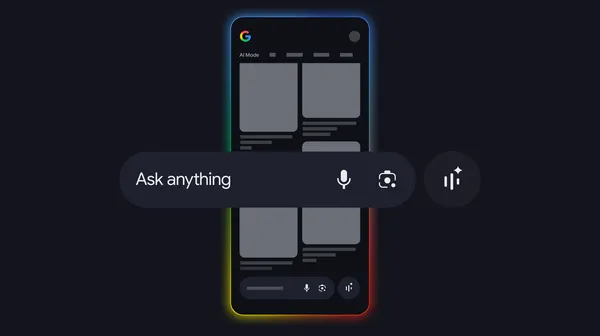

- Google's new AI Mode in Search uses a "query fan-out" technique to analyze a user-uploaded image and automatically generate multiple descriptive text queries.

- The system is designed to provide a more thorough set of search results by covering various aspects of an object (e.g., a plant's species, care instructions, and toxicity) in a single search.

- This feature is an evolution of Google Lens and Multisearch, moving beyond simple visual matching to generative, multi-faceted query understanding.

- The technology is powered by Google's multimodal foundation models, including Gemini, which interpret visual content and generate relevant, diverse search intents.

How AI Mode's Query Fan-Out Transforms Visual Search

At its core, the new AI Mode feature addresses a basic limitation of traditional image search. When a user uploads a photo, for example of an unfamiliar houseplant, conventional systems typically try to match it against a known visual index and return similar images or a single best-guess text result. Google's query fan-out method takes a different approach. Instead of issuing one lookup, the AI model analyzes the image and generates several distinct, complementary text queries that explore different dimensions of the subject.

For instance, an image of a specific chair might fan out into queries like "mid-century modern armchair design history," "where to buy [brand] replica chairs," and "how to reupholster velvet dining chairs." The system then executes these queries in parallel and synthesizes the findings into a unified, AI-generated overview for the user. This approach mimics how a human might interrogate a visual subject with multiple questions to gain a holistic understanding, a process that was previously manual and sequential for the user.
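The flow described above (generate several queries, run them in parallel, merge the findings) can be sketched in a few lines of Python. This is a minimal illustration and not Google's implementation: `generate_queries`, `run_search`, and `fan_out_search` are hypothetical names, the facet list is hard-coded where a multimodal model would produce it, and the "synthesis" step is a plain merge rather than an AI-generated overview.

```python
from concurrent.futures import ThreadPoolExecutor


def generate_queries(subject: str) -> list[str]:
    """Hypothetical stand-in for the multimodal model: one query per facet."""
    facets = ["design history", "where to buy", "how to reupholster"]
    return [f"{subject} {facet}" for facet in facets]


def run_search(query: str) -> dict:
    """Hypothetical stand-in for a web search backend."""
    return {"query": query, "results": [f"result for '{query}'"]}


def fan_out_search(subject: str) -> dict:
    queries = generate_queries(subject)
    # Run the generated queries concurrently; map() preserves input order.
    with ThreadPoolExecutor(max_workers=len(queries)) as pool:
        responses = list(pool.map(run_search, queries))
    # "Synthesis" here is a simple merge; a real system would summarize
    # the combined results with a generative model.
    merged = [hit for response in responses for hit in response["results"]]
    return {"subject": subject, "queries": queries, "results": merged}


overview = fan_out_search("mid-century modern armchair")
for query in overview["queries"]:
    print(query)
```

The point of the sketch is the shape of the pipeline: one input becomes several independent queries, so total latency is bounded by the slowest query rather than by the sum of all of them.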

The technology is built on Google's multimodal AI infrastructure. Models like Gemini, which are trained jointly on text, images, and other data, provide the foundational ability to "understand" the content of the image and reason about what textual information a user might seek. This is a direct application of generative AI capabilities, until now used mostly for creating text or images, to the core search discovery process.

Industry Context & Analysis

Google's query fan-out launch is a strategic move in the intensifying AI search wars, directly countering features from competitors like Microsoft's Copilot (powered by GPT-4) and Perplexity AI. Unlike OpenAI's ChatGPT or Copilot, which primarily handle conversational text prompts, Google is leaning on its strength in computer vision and its vast index of the visual web. Perplexity has gained traction by focusing on answer generation with citations, reporting over 10 million monthly active users and a $520 million valuation. Google, by contrast, is building answer generation directly into visual search itself.

Technically, this shift from retrieval to generation is significant. Traditional visual search relies on embeddings and nearest-neighbor lookups in a massive, pre-computed index. Google's new method uses a generative model to write new search queries, which are then processed by its standard web search systems. This hybrid approach combines the interpretive power of generative AI with the reliability and scale of traditional web search. The challenge will be ensuring that the fanned-out queries are both diverse and precise; irrelevant or overly broad generated queries could degrade result quality rather than improve it.
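For contrast, the retrieval-style baseline mentioned above can be illustrated with a toy nearest-neighbor lookup. This is a sketch under stated assumptions: the three-dimensional vectors and labels in `INDEX` are made up for illustration, and a production index would use learned embeddings with hundreds of dimensions and an approximate nearest-neighbor structure instead of a full scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy pre-computed index: label -> made-up image embedding.
INDEX = {
    "monstera": [0.9, 0.1, 0.0],
    "armchair": [0.0, 0.8, 0.6],
    "bicycle": [0.1, 0.2, 0.9],
}


def nearest_neighbors(query_vec: list[float], index: dict, k: int = 1) -> list[str]:
    """Return the k labels whose embeddings are most similar to query_vec."""
    ranked = sorted(
        index,
        key=lambda label: cosine_similarity(query_vec, index[label]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical embedding of the uploaded photo.
query = [0.85, 0.2, 0.05]
top = nearest_neighbors(query, INDEX, k=2)
print(top)
```

A lookup like this can only return what is already in the index. The fan-out approach differs in that the model writes queries that did not previously exist and hands them to ordinary web search.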

This development follows a clear industry pattern of using AI to decompose complex user intent. It is analogous to the "chain-of-thought" prompting used in large language models to solve reasoning problems, but applied at the query level for search. Google's scale gives it a distinct advantage: the very large volume of visual searches it handles provides a rich dataset for training and refining these multimodal fan-out models. The feature also tightens the integration loop between Google's AI research (Gemini) and its flagship product (Search), creating a more defensible ecosystem while competitors license foundation models from third parties like OpenAI.

What This Means Going Forward

For users, the immediate benefit is a more powerful and intuitive discovery tool. Searching with images becomes a starting point for exploration rather than a simple lookup, reducing the need for multiple, manually crafted follow-up searches. This is particularly valuable for educational, shopping, and DIY use cases where objects are complex and context-dependent.

For the broader search and AI industry, Google's move raises the bar for visual understanding. It pressures competitors to develop similarly sophisticated multimodal agents or risk ceding ground in a critical search modality. We can expect to see rapid iteration, with future versions potentially fanning out into mixed-media queries (combining text and image modifications) or integrating real-time information layers like local inventory or pricing.

The key metrics to watch will be user engagement with the AI-generated overviews and the click-through rate on the provided web links. If successful, this technology will likely proliferate across Google's ecosystem, enhancing products like Google Shopping, Maps, and Assistant. The long-term implication is a continued blurring of the line between search as a database query and search as an AI-powered research assistant, fundamentally changing how we navigate the world's information.